How an APAC non-bank lender discovered that fraud was sitting in its default book, miscategorised as bad credit. Four deepfakes confirmed. Zero flagged by their incumbent.

A digital non-bank lender (personal loans, credit cards, online onboarding, sub-60-minute funding SLA, approximately 10,000 identity checks per month) sent us five recent applications their fraud team suspected were synthetic. Their incumbent IDV provider, delivered through a credit bureau partnership, had cleared all five. We flagged the four deepfakes and confirmed the genuine one.

That result was the start of a different conversation. Not about a new threat, but about losses already on the lender's book that had been miscategorised as bad credit decisions when they were in fact fraud.

Three checks. Different questions. Different answers.

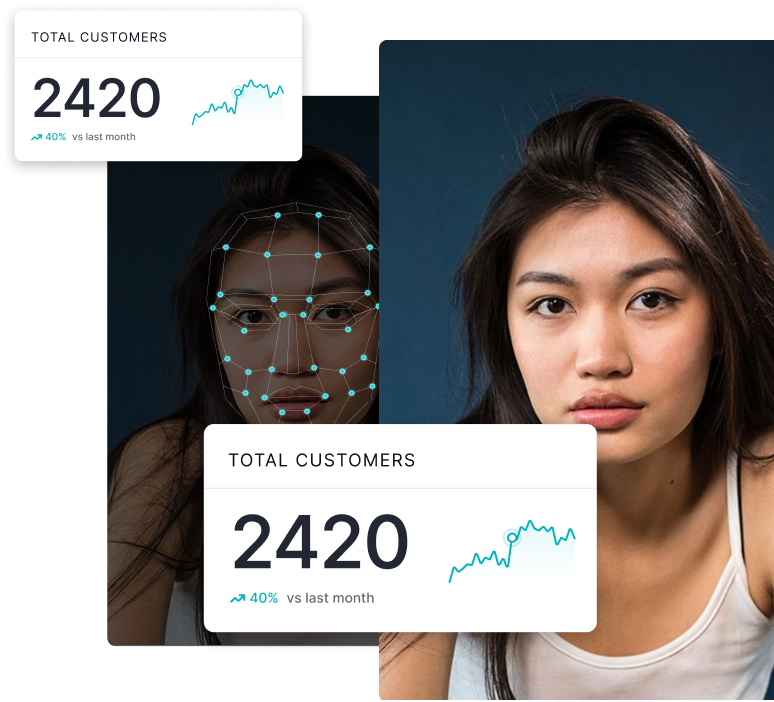

Every onboarding video goes through three checks that look similar but answer different questions. Conflating them is the most common reason deepfakes pass production identity verification today.

Layer 1: Liveness / PAD

Is there a real human in front of the camera, or a printed photo, screen replay, or 3D mask? This is what IDV platforms are built for and do well.

Layer 2: Injection detection

Is the video stream being fed into the camera API by software, bypassing the physical camera? Some liveness vendors have added this. Some claim it.

Layer 3: Deepfake content detection

Even with a real human and a genuine camera, is the face being shown real or synthetic? This is the specialist layer. This is what DuckDuckGoose AI does.

A deepfake can pass liveness and pass injection detection at the same time. The person is real. The camera is genuine. The stream is not injected. But the face being captured is synthetic. PAD and injection detection look at the delivery mechanism. Specialist deepfake detection looks at the content itself. The two systems do not duplicate one another. They cover different attack surfaces, which is why they sit beside one another, not against one another.
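The layering can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `CheckResults` type, its field names, and the two `decide` functions are hypothetical, and real systems return scores rather than booleans. It shows only why a verdict built on Layers 1 and 2 accepts the case described above while a verdict that includes Layer 3 rejects it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResults:
    """Outcome of the three independent checks for one onboarding video.

    Hypothetical structure for illustration only.
    """
    liveness_passed: bool      # Layer 1: a real human is in front of the camera
    injection_detected: bool   # Layer 2: the stream was fed in by software
    deepfake_detected: bool    # Layer 3: the face content is synthetic


def decide_without_layer3(results: CheckResults) -> str:
    """Verdict when only liveness and injection detection are in place."""
    if not results.liveness_passed or results.injection_detected:
        return "reject"
    return "accept"


def decide(results: CheckResults) -> str:
    """Verdict when all three layers are in place: any failure rejects."""
    if decide_without_layer3(results) == "reject":
        return "reject"
    if results.deepfake_detected:
        return "reject"
    return "accept"


# The case from the text: liveness passes, nothing is injected,
# but the face being captured is synthetic.
case = CheckResults(
    liveness_passed=True,
    injection_detected=False,
    deepfake_detected=True,
)

print(decide_without_layer3(case))  # accept
print(decide(case))                 # reject
```

The third check is added to the first two rather than replacing them, which matches the point that the layers cover different attack surfaces.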

"Our incumbent says they cover this. They are updating their tool."

The lender's first instinct was that their incumbent would close the gap in the next release. Two things changed that calculation.

Cadence, not capability: Generative models evolve faster than enterprise vendor release cycles. By the time the incumbent's synthetic identity module ships, the attack vectors it was designed against will have moved on. This is not a vulnerability that gets patched once. It is a moving target that requires continuous retraining against attacks that did not exist three months ago. A specialist with a dedicated R&D pipeline can operate at that cadence. A platform with six or seven product lines and a quarterly release schedule cannot.

Specialisation, not headcount: A large IDV platform has more engineers than DuckDuckGoose AI does. It also has six or seven product lines, each with its own roadmap and resourcing. The engineers whose full-time work is keeping pace with the latest generative models form a small team within the platform. DuckDuckGoose AI's entire R&D function does that and only that. The relevant comparison is not total headcount. It is focused R&D capacity against a moving threat.

How this proceeds

A specialist control of this kind is not signed off in a single decision. What an executive sponsor authorises today is the first stage only, and every subsequent decision has its own gate.

Stage 01: Structured benchmark

Blind sample, properly designed, against production traffic. Criteria defined by the lender. 2 to 4 weeks. No commercial commitment.
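As a rough sketch of how a blind sample might be scored, the snippet below computes a detection rate and a false positive rate per provider. The `score` function and the ordering of the sample are assumptions made for illustration. The inputs reuse the five applications described at the top of this article (four confirmed deepfakes, one genuine), which is far too small for a real benchmark and is used here only to show the arithmetic.

```python
def score(labels, flagged):
    """Return (detection_rate, false_positive_rate) for one provider.

    labels:  True where the application is a confirmed deepfake.
    flagged: True where the provider flagged that application.
    Hypothetical helper for illustration only.
    """
    on_fakes = [f for is_fake, f in zip(labels, flagged) if is_fake]
    on_genuine = [f for is_fake, f in zip(labels, flagged) if not is_fake]
    detection_rate = sum(on_fakes) / len(on_fakes) if on_fakes else 0.0
    false_positive_rate = sum(on_genuine) / len(on_genuine) if on_genuine else 0.0
    return detection_rate, false_positive_rate


# Four deepfakes and one genuine application; the order is arbitrary.
labels = [True, True, True, True, False]

# The incumbent cleared all five; the specialist flagged the four deepfakes.
incumbent_flags = [False, False, False, False, False]
specialist_flags = [True, True, True, True, False]

print(score(labels, incumbent_flags))   # (0.0, 0.0)
print(score(labels, specialist_flags))  # (1.0, 0.0)
```

In a structured benchmark the lender would set the acceptance criteria on both numbers in advance, since a detector that flags everything also scores a perfect detection rate.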

Stage 02: Paid pilot

Defined slice of onboarding traffic. Real production data on flag rates, review workflow, integration stability. 3 to 6 months. Clear exit ramp.

Stage 03: Production deployment

Full onboarding flow, calibrated thresholds, integrated review workflow. Reviewed quarterly against the attack landscape and retraining cadence.
