Introduction

Identity verification has a structural problem. The layers that most organisations rely on (document checks, database lookups, liveness detection) each do their job well. But collectively, they share a common blind spot: they were designed for a world where the face on the screen belongs to the person holding the phone. Today, that assumption is no longer safe.

Deepfake attacks have shifted from novelty to operational threat. Industry research covering European financial institutions, reported by FF News in February 2025, found that deepfakes now account for 6.5% of all fraud attempts, up from less than 1% in 2021, a 2,137% increase over three years. The pattern underneath that number matters more than the headline: the attacks are not replacing other fraud vectors. They are exploiting the gaps between layers that were never designed to communicate with each other.

This is the conversation that the identity verification industry needs to have. Deepfake detection is not a replacement for liveness checks or document verification. It is not a competitor to KYC orchestration. It is a specific capability that addresses a specific class of threat, and understanding exactly where it fits in the stack is the first step toward deploying it effectively.

Key Takeaways

- Liveness detection confirms a real human is present. It does not confirm the face is genuine. Those are different questions requiring different capabilities.

- Injection attacks route deepfake video through virtual camera software, bypassing liveness entirely. This is the primary attack vector targeting IDV systems today.

- Deepfake detection is a media forensics layer that sits downstream of liveness, analysing the same frames already captured for AI-generated artefacts.

- 42% of organisations rely primarily on liveness detection for deepfake protection, despite liveness having no mechanism to catch injection attacks.

- Explainability is not optional in regulated environments. Every flag needs an audit trail that compliance teams, fraud investigators, and regulators can act on.

- The threat is not limited to financial services. Any remote verification process that uses a face is an attack surface.

The IDV stack as it usually exists

A mature identity verification stack typically runs in sequence. A user submits a government-issued document, which goes through optical character recognition and authenticity checks. They complete a selfie or a video interaction, which a liveness engine analyses for signs of physical presence. Their data is cross-referenced against watchlists, sanctions registers, and credit databases. The output is a risk score or a pass/fail decision, and the user moves through to onboarding.

Each layer handles something distinct. Document verification catches altered or fabricated IDs. Database screening catches known bad actors. Liveness detection catches someone holding up a photo or wearing a mask. The logic is layered and, against the fraud landscape of five years ago, it was largely sufficient.

The stack's fundamental weakness is that liveness detection was never designed to catch AI-generated synthetic video. It was designed to confirm that a real, live person is present. Those are not the same thing. When a fraudster routes a deepfake video stream through a virtual camera application and presents it to a liveness system, the system sees a face moving naturally, blinking, responding to prompts. It sees exactly what it was built to look for. And it passes the attempt.

In late 2024, researchers demonstrated that widely available face-swapping tools combined with virtual camera software could successfully bypass active liveness checks on financial services applications. The attack required no specialist skills, no custom hardware, and no access to proprietary systems. It required a desktop application, publicly available facial images, and a few minutes of preparation.

What liveness detection actually detects

To understand where deepfake detection fits, it helps to be precise about what liveness detection does and does not do.

Liveness detection is designed to answer one question: is there a real human present at the camera? It looks for physiological signals: micro-movements, pulse signals derived from skin tone variation, the natural randomness in how people respond to prompts. It is highly effective at catching presentation attacks: printed photographs, video replays of a real person, silicone masks. These are physical spoofing attempts, and liveness detection was purpose-built for them.

What liveness detection is not designed to do is evaluate whether the face it is seeing is genuine. A deepfake video, rendered convincingly in real time and streamed through a virtual camera, can contain all the physiological signals a liveness system looks for. The face moves. The skin shows the expected variation. The eyes track. The system, operating exactly as intended, concludes that a live human is present. In a real-time face swap it is even right: there is a live human driving the video. But the face on screen belongs to someone else entirely.

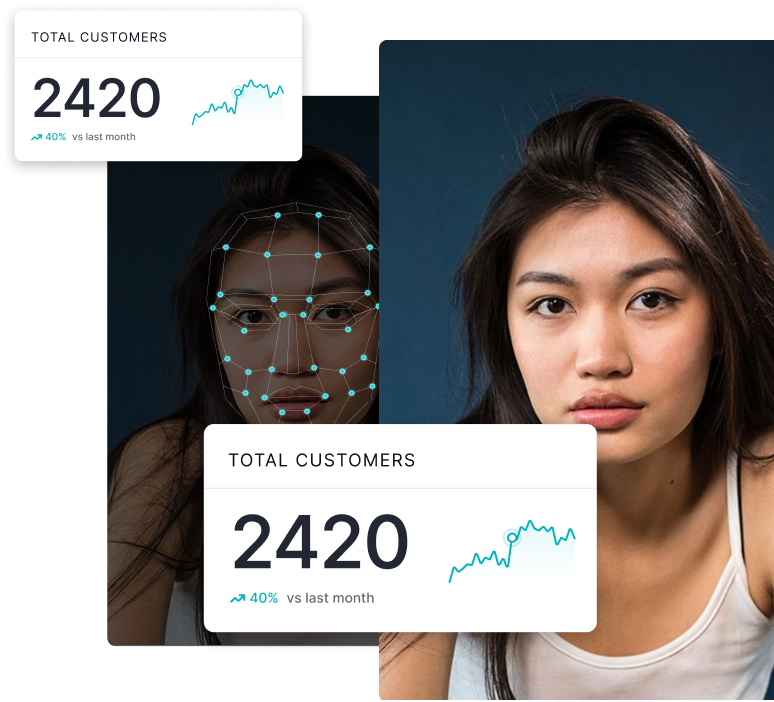

This is why the two capabilities are complementary rather than redundant. A poll conducted at Biometric Update's 2025 deepfake detection webinar found that 42% of organisations rely primarily on liveness detection or presentation attack detection for deepfake protection, despite the fact that liveness systems do not address injection attacks at all. That gap between what organisations believe their stack does and what it actually does is precisely where fraud enters.

The injection attack problem

The threat vector that has reshaped this conversation is the injection attack. Rather than presenting a physical spoof to a real camera, attackers replace the video stream itself before it reaches the verification system. A deepfake video, either pre-rendered or generated in real time, is injected into the software pipeline as if it were a genuine camera feed.

Injection attacks targeting mobile web applications surged dramatically in 2024, with virtual camera exploits spiking sharply year on year, according to threat intelligence reporting covered by The Register in March 2025. The attack ecosystem has become commoditised: purpose-built deepfake-as-a-service tools are available for as little as $10 to $50, and ready-to-use synthetic identities sell for under $15 on criminal marketplaces, according to a January 2026 analysis by Biometric Update.

The industrialisation of this capability is what makes the scale alarming. Between January and August 2025 alone, one financial institution recorded more than 8,000 attempts to bypass its liveness checks for KYC loan applications using AI-generated deepfake images, according to the same Biometric Update analysis. These were not one-off experiments. They were systematic, iterative attacks against a specific vulnerability in the IDV pipeline.

Liveness detection, even excellent liveness detection, has no mechanism to identify that the feed it is analysing originated from software rather than a camera. That is a structural constraint, not a quality failure.

Addressing injection attacks requires a separate analytical layer operating on the content of the media itself. That is exactly what deepfake detection is designed to provide.

Where deepfake detection belongs in the stack

Deepfake detection is a media forensics capability. It analyses the visual content of an image or video frame for the artefacts, inconsistencies, and statistical signatures that generative AI introduces during synthesis. GAN-generated faces exhibit specific frequency patterns invisible to human perception. Face-swap outputs carry boundary artefacts at hairlines and skin edges. AI-rendered textures fail to replicate the random, organic variation of natural skin at a pixel level. A dedicated deepfake detection model trained on these signals can identify synthetic media with high confidence, operating on the same frames that a liveness system just declared authentic.
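To make "frequency patterns" concrete, the sketch below measures how much of a grayscale image's energy survives a simple high-pass (Laplacian) filter. This is only a toy illustration of what a frequency-domain signal is: production detectors are trained models, and the function name `high_frequency_ratio` and the plain nested-list image format are assumptions made for this example, not part of any particular product.

```python
from typing import Sequence

# Assumption for this sketch: an image is rows of grayscale pixel values.
Gray = Sequence[Sequence[float]]


def high_frequency_ratio(image: Gray) -> float:
    """Ratio of Laplacian-filtered energy to raw pixel energy (interior pixels only)."""
    height, width = len(image), len(image[0])
    if height < 3 or width < 3:
        raise ValueError("image must be at least 3x3")
    high_energy = 0.0
    total_energy = 0.0
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            centre = image[y][x]
            # 4-neighbour Laplacian: responds to rapid pixel-to-pixel change.
            laplacian = (
                4 * centre
                - image[y - 1][x] - image[y + 1][x]
                - image[y][x - 1] - image[y][x + 1]
            )
            high_energy += laplacian ** 2
            total_energy += centre ** 2
    return high_energy / total_energy if total_energy else 0.0


# A smooth gradient versus a pixel-level checkerboard.
smooth = [[float(x + y) for x in range(8)] for y in range(8)]
checker = [[255.0 if (x + y) % 2 else 0.0 for x in range(8)] for y in range(8)]
```

A smooth gradient yields a ratio of zero, while the checkerboard yields a large one. A real forensic model learns far subtler spectral differences than this, but the principle of examining content that the eye does not register is the same.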

This means deepfake detection sits downstream of liveness in the stack, not in parallel with it. The appropriate architecture has liveness detection confirming that a live person is present, and deepfake detection confirming that the face being presented is genuine and not AI-synthesised. Together, they answer two different questions that a complete IDV system needs to answer.
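The ordering described above can be sketched as a small two-stage function. Everything here is hypothetical scaffolding: `verify_face`, `StackDecision`, and the two callables stand in for whatever liveness and deepfake detection engines a given stack actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

Frame = bytes  # placeholder type for one captured, encoded frame


@dataclass
class StackDecision:
    live_person_present: bool
    face_is_genuine: Optional[bool]  # None when the forensic layer did not run

    @property
    def passed(self) -> bool:
        # Both questions must be answered "yes" for the attempt to pass.
        return self.live_person_present and self.face_is_genuine is True


def verify_face(
    frames: Sequence[Frame],
    is_live: Callable[[Sequence[Frame]], bool],
    is_genuine_face: Callable[[Sequence[Frame]], bool],
) -> StackDecision:
    """Run liveness first, then deepfake detection on the same frames."""
    if not is_live(frames):
        # No live person detected: stop before the forensic layer.
        return StackDecision(live_person_present=False, face_is_genuine=None)
    # Same frames, second question: is the face itself AI-synthesised?
    return StackDecision(
        live_person_present=True,
        face_is_genuine=is_genuine_face(frames),
    )
```

The point of the sketch is that the second check reuses the frames the first one already accepted, so an attempt that satisfies liveness can still fail on the separate question of whether the face is genuine.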

The position in the stack also has implications for when deepfake detection needs to run. At onboarding, the risk is highest because identity is being established for the first time. But the threat does not stop there. Video KYC re-verification, high-value transaction authentication, and any remote interaction where a face is used to confirm identity are all potential attack surfaces. A deepfake detection capability that only runs at onboarding is covering one door while leaving others open.

Regula's 2025 identity fraud research found that in 2024, half of businesses surveyed had experienced at least one audio or video deepfake attack. In the financial sector, the exposure was higher. The pattern is consistent: attacks target the moments of human-facing verification, which now occur throughout the customer lifecycle, not only at initial sign-up.

The explainability requirement

There is a dimension to deepfake detection in an IDV context that purely technical discussions tend to underemphasise: the need to explain decisions.

When a deepfake detection model flags a verification attempt, that decision needs to be defensible. Regulatory frameworks governing identity verification in financial services, insurance, and government require that automated decisions with material consequences can be reviewed, audited, and appealed. A black-box signal that outputs a confidence score is not sufficient for compliance teams, fraud investigators, or regulators to act on.

Explainable AI architecture in deepfake detection means the system can identify and communicate which specific features of the media prompted its decision: the boundary inconsistency at the jawline, the frequency anomaly in the skin texture, the compression artefact pattern inconsistent with the claimed camera source. This audit trail is not an optional extra in a regulated environment. It is a prerequisite for integrating deepfake detection into workflows where human review needs to take over from automated screening.
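As a rough illustration of what such an audit trail might contain, the sketch below serialises a flagged attempt together with the specific findings behind it. The field names (`session_id`, `model_version`, `confidence_synthetic`, `findings`) and the 0 to 1 score scale are assumptions chosen for this example, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class Finding:
    region: str   # where in the frame, e.g. "jawline"
    signal: str   # what was observed, e.g. "boundary_inconsistency"
    score: float  # hypothetical 0..1 contribution to the decision


@dataclass
class AuditRecord:
    session_id: str
    model_version: str
    confidence_synthetic: float
    findings: List[Finding]
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # asdict() recurses into the nested Finding entries.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    session_id="session-001",      # illustrative values only
    model_version="demo-0",
    confidence_synthetic=0.93,
    findings=[
        Finding("jawline", "boundary_inconsistency", 0.61),
        Finding("cheek_skin", "frequency_anomaly", 0.27),
    ],
)
```

Storing the model version and a timestamp alongside the findings lets a reviewer see what was flagged and which model flagged it, which matters when a decision is reviewed or appealed later.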

NIST SP 800-63-4, published in July 2025, formalised the digital identity risk management framework that underpins how assurance levels are determined in identity proofing. The emphasis on remote proofing standards and the documentation requirements that come with them make explainability a compliance concern, not just an operational preference.

Integration: what a well-designed stack looks like

A deepfake detection IDV integration that works in production has a few consistent characteristics.

It operates on the same media already captured by the verification workflow. A solution that requires a separate video submission or a different capture interface creates friction and fails to scale. Effective integration means analysing the frames that document verification and liveness detection already process, without introducing additional steps for the user.

It runs in near-real time. The commercial constraint in identity verification is onboarding conversion. Any capability that meaningfully slows the verification journey will be disabled by operations teams long before fraud teams can demonstrate its value. Deepfake detection needs to operate at the speed of the existing pipeline.

It produces outputs that the orchestration layer can act on. This means structured signals: confidence levels, specific anomaly indicators, and flags that route edge cases to human review rather than automatically rejecting them. Pass/fail alone is not enough. The customer experience matters as much as the security outcome.

It is maintained against evolving attack techniques. The generative AI landscape is not static. New architectures, new training approaches, and new attack tooling appear continuously. A deepfake detection model trained against the threat landscape of 2022 will not perform adequately against the attacks of 2025. Active model development and threat monitoring are not product differentiators. They are baseline requirements.
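The third characteristic, structured outputs that the orchestration layer can act on, can be sketched as a small routing function. The thresholds (`review_at`, `reject_at`), the `DeepfakeSignal` shape, and the anomaly labels are hypothetical; real values would be tuned against a stack's own false-positive and conversion data.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Route(Enum):
    PASS = "pass"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


@dataclass(frozen=True)
class DeepfakeSignal:
    confidence_synthetic: float  # 0..1, higher means more likely AI-generated
    anomalies: Tuple[str, ...]   # named indicators, e.g. ("hairline_boundary",)


def route(
    signal: DeepfakeSignal,
    review_at: float = 0.4,   # assumed threshold, not a recommended value
    reject_at: float = 0.9,   # assumed threshold, not a recommended value
) -> Route:
    """Map a structured signal to an orchestration decision."""
    if not 0.0 <= signal.confidence_synthetic <= 1.0:
        raise ValueError("confidence_synthetic must be between 0 and 1")
    if signal.confidence_synthetic >= reject_at:
        return Route.REJECT
    # Edge cases go to a person instead of being rejected automatically.
    if signal.confidence_synthetic >= review_at or signal.anomalies:
        return Route.HUMAN_REVIEW
    return Route.PASS
```

Keeping a middle band for human review is the design choice that protects conversion: only high-confidence detections are rejected outright, and ambiguous attempts reach an investigator with the anomaly indicators attached.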

The sector question

Deepfake detection in identity verification is sometimes framed as a financial services problem. The data on attack frequency does concentrate heavily in finance, crypto, and fintech. But the attack vector is sector-agnostic, and the dependency on remote identity verification is expanding across sectors.

Government digital identity programmes, remote insurance underwriting, legal entity verification for corporate onboarding, and healthcare patient identification all rely on biometric verification to establish identity remotely. As eIDAS 2.0 accelerates digital identity adoption across the European Union and national digital identity frameworks mature in markets from Singapore to Australia, the attack surface is expanding with the adoption curve.

Acuity Market Intelligence projects that government services alone will execute more than 6.4 trillion identity transactions and spend in excess of $200 billion on biometrics and digital identity solutions between 2024 and 2028. The deepfake threat does not require a bank account to be relevant. It requires any remote verification process that uses a face.

A layer that earns its place

The question identity verification architects face is not whether to include deepfake detection in their stack. The question is how to integrate it so that it adds genuine signal without adding friction, and how to ensure it complements rather than duplicates the capabilities already in place.

That requires being clear about what each layer does. Document verification cannot detect a synthetic face. Database screening cannot detect a deepfake video. Liveness detection cannot identify an AI-generated face when it moves convincingly. Deepfake detection addresses the specific failure mode that all of those layers share: the assumption that the face in the frame is real.

When that assumption holds, the existing stack works. When it does not, deepfake detection is the only layer equipped to catch the failure. That is not a minor gap. It is the gap that organised fraud operations are walking through at scale, today.

At DuckDuckGoose AI, we build explainable deepfake detection that integrates directly into identity verification workflows without additional capture steps or user friction. Our models are developed for production environments where compliance, speed, and audit trail requirements are non-negotiable. If you are evaluating where deepfake detection fits in your IDV stack, we are glad to walk through the architecture with you.

See Where Your Stack Is Exposed

We can walk through your current IDV architecture and show exactly where deepfake detection adds signal without adding friction.
