Introduction

Synthetic identity fraud does not rely on a stolen identity. It relies on a constructed one. Fraudsters combine a real Social Security Number with a fabricated name, date of birth, and address to create a person who exists entirely on paper and who passes document checks, KYC, and biometric liveness verification with alarming consistency. This post breaks down how those identities are built, why every layer of verification falls short, and what the growing case record, from Amsterdam to Southeast Asia, reveals about the direction of the threat.

Key Takeaways

- Synthetic identities are built, not stolen: a real SSN combined with fabricated details creates a person who exists only in financial systems, with no victim to trigger an alert.

- The credit bureau creates the vulnerability it is meant to prevent: a denied application automatically generates a credit file, which is the fraudster's actual objective.

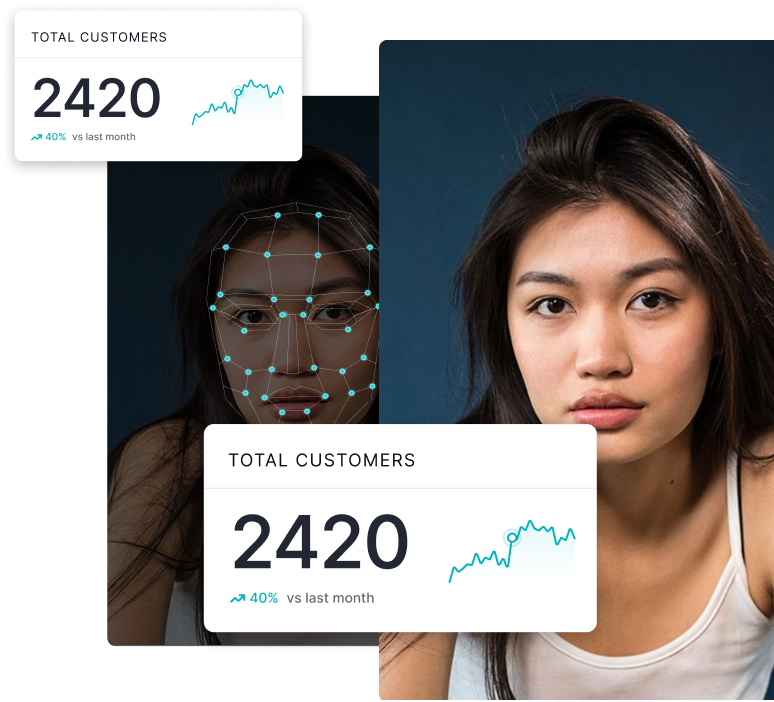

- Biometric liveness checks fail because deepfake face-swaps preserve genuine biological signals while replacing only the identity. The liveness signal is real. The person is not.

- In December 2025, a single individual in Amsterdam opened 46 bank accounts across multiple institutions using AI face-swap tools and passport copies obtained through a rental housing scam.

- The detection gap is structural: verification layers check whether data is valid in isolation, not whether it belongs to a real human being. Closing it requires reasoning across signals simultaneously, with full explainability.

There is no victim to call their bank. No disputed charge on a statement, no police report, no alert triggered. The loss lands on a balance sheet months later, classified as a credit write-off, and is never attributed to fraud. This structural invisibility is not incidental to synthetic identity fraud. It is the whole point.

A synthetic identity is not a stolen one. It is assembled. A real Social Security Number, sourced from someone with no reason to monitor their credit, gets paired with a fabricated name, date of birth, and address. The result is a person who exists entirely on paper, passing document verification, KYC checks, and, increasingly, biometric liveness detection with a consistency that points to a fundamental flaw in how identity is verified today.

The scale is no longer theoretical. TransUnion's mid-2025 fraud report placed U.S. lender exposure to suspected synthetic identities at $3.3 billion, and their share of bankcard credit inquiries exceeded 1% for the first time. In the UK, a 60% year-on-year rise in false identity cases pushed synthetic fraud to nearly a third of all detected identity fraud, with only 25% of financial services companies confident they can address it. Across the EU, the EBA and ECB's joint 2025 payment fraud report recorded total fraud losses of €4.2 billion in 2024, up 17% in a single year, with new fraud typologies consistently outpacing the authentication controls built to stop them.

The crime is structurally designed to be invisible. Losses land as credit defaults, not fraud. There is no victim to raise the alarm.

The construction of a person who never existed

Understanding why synthetic identities pass verification requires understanding how they are built. The process is methodical and patient, designed to exploit the gap between systems that were never intended to cross-reference each other.

The foundation is always a real Social Security Number: genuine, belonging to someone with no reason to check their credit. Children are the most targeted group. Carnegie Mellon University's CyLab found children's SSNs are 51 times more likely to be used in synthetic fraud than adults', precisely because there is no monitoring in place and the exploitation window spans years. A 2011 SSA decision to randomise SSN issuance, while well-intentioned, inadvertently removed one of the few ways lenders could spot structurally implausible numbers. A randomly issued SSN now looks identical to a fabricated one.
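The randomisation point is easy to see in code. The minimal sketch below uses a hypothetical function name and encodes the publicly known "never issued" SSN ranges. It checks format only. It cannot tell whether a number was issued or to whom, because after 2011 the area number no longer encodes a state or an issuance period.

```python
def is_structurally_valid_ssn(ssn: str) -> bool:
    """Return True if `ssn` avoids the number ranges the SSA never issues.

    Format check only: it cannot confirm that a number was issued, to whom,
    or when, because randomised issuance removed those structural clues.
    """
    digits = ssn.replace("-", "")
    if len(digits) != 9 or not digits.isdigit():
        return False
    area, group, serial = int(digits[:3]), int(digits[3:5]), int(digits[5:])
    if area == 0 or area == 666 or area >= 900:
        return False  # area numbers 000, 666 and 900-999 are not assigned
    if group == 0 or serial == 0:
        return False  # an all-zero group (00) or serial (0000) is never issued
    return True


print(is_structurally_valid_ssn("123-45-6789"))  # True: passes the structural check
print(is_structurally_valid_ssn("666-12-3456"))  # False: reserved area number
```

Any fabricated number that avoids those few ranges passes this check exactly as a genuine, randomly issued one does. That is the lender's problem in miniature.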

Once the SSN is paired with invented details, the fraudster applies for credit and is denied. That denial is the objective. The rejected application prompts the credit bureau to create a file for this new entity. The synthetic identity is born into the financial system through the very mechanism designed to document legitimate borrowers.
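A toy model shows why the denial still counts as a success for the fraudster. The file is opened when the inquiry arrives, not when credit is approved. The `ToyBureau` class below is hypothetical and keys files naively on SSN, name and date of birth. Real bureaus use their own matching rules. The sketch only illustrates the ordering described above.

```python
from dataclasses import dataclass, field


@dataclass
class CreditFile:
    ssn: str
    name: str
    dob: str
    inquiries: list[str] = field(default_factory=list)
    tradelines: list[str] = field(default_factory=list)


class ToyBureau:
    """Hypothetical, heavily simplified bureau keyed on (SSN, name, DOB)."""

    def __init__(self) -> None:
        self.files: dict[tuple[str, str, str], CreditFile] = {}

    def record_inquiry(self, ssn: str, name: str, dob: str, lender: str, approved: bool) -> CreditFile:
        key = (ssn, name, dob)
        credit_file = self.files.get(key)
        if credit_file is None:
            # A file is opened on the first inquiry, whatever the lending decision.
            credit_file = CreditFile(ssn, name, dob)
            self.files[key] = credit_file
        credit_file.inquiries.append(lender)
        if approved:
            credit_file.tradelines.append(lender)
        return credit_file


bureau = ToyBureau()
f = bureau.record_inquiry("123-45-6789", "Jane Roe", "1990-01-01", "Lender A", approved=False)
print(len(bureau.files), f.inquiries, f.tradelines)  # 1 ['Lender A'] []
```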

Over the following months, the identity is cultivated: added as an authorised user on an existing account with strong payment history, transferring years of positive behaviour to a person who does not exist. Small credit lines are obtained and repaid. A credit score is built. Then, at a moment of maximum exposure, every available line is drawn down simultaneously. The fraudster vanishes. According to Juniper Research, the average bust-out yields between $81,000 and $98,000 per synthetic identity, with losses absorbed as credit defaults and never flagged as fraud.
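The draw-down has a recognisable shape. The minimal sketch below uses a hypothetical `Tradeline` record holding a limit and balances at two snapshots. It flags an account when aggregate utilisation jumps from low to near the limit across most lines at once. All three thresholds are illustrative assumptions, not calibrated values.

```python
from dataclasses import dataclass


@dataclass
class Tradeline:
    limit: float
    prior_balance: float    # balance at an earlier snapshot
    current_balance: float  # balance now


def looks_like_bust_out(
    lines: list[Tradeline],
    low: float = 0.3,    # illustrative: prior aggregate utilisation at or below this
    high: float = 0.9,   # illustrative: "maxed out" utilisation threshold
    share: float = 0.8,  # illustrative: fraction of lines that must be maxed
) -> bool:
    """Flag a sudden, simultaneous draw-down across most credit lines."""
    usable = [t for t in lines if t.limit > 0]
    if not usable:
        return False
    total_limit = sum(t.limit for t in usable)
    prior = sum(t.prior_balance for t in usable) / total_limit
    current = sum(t.current_balance for t in usable) / total_limit
    maxed = sum(1 for t in usable if t.current_balance / t.limit >= high)
    return prior <= low and current >= high and maxed / len(usable) >= share


lines = [
    Tradeline(1000, 100, 990),
    Tradeline(2000, 200, 1950),
    Tradeline(5000, 300, 4900),
]
print(looks_like_bust_out(lines))  # True
```

The design limitation matters more than the thresholds. This is a lagging signal. By the time it fires, the lines have already been drawn, which is why these losses so often surface only as defaults.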

Why every layer of verification falls short

Traditional identity verification was designed to check whether data points are valid in isolation, not whether they coherently belong to a real human being. No single database cross-references name, SSN, date of birth, and address simultaneously. Synthetic identities exploit exactly that gap.
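The difference between field-level and joint validation fits in a few lines. In the sketch below, every field check passes independently. The synthetic applicant fails only when the SSN is tested against who it actually belongs to. The `ssn_holders` mapping, the names and the simple format rules are all hypothetical stand-ins for an authoritative SSN-to-holder lookup.

```python
from datetime import date

# Field-level checks: each asks only whether a value is well formed on its own.
FIELD_CHECKS = {
    "name": lambda v: bool(v.strip()),
    "ssn": lambda v: len(v.replace("-", "")) == 9 and v.replace("-", "").isdigit(),
    "dob": lambda v: date(1900, 1, 1) <= v <= date.today(),
    "address": lambda v: bool(v.strip()),
}


def fields_pass(applicant: dict) -> bool:
    return all(check(applicant[name]) for name, check in FIELD_CHECKS.items())


def identity_coheres(applicant: dict, ssn_holders: dict) -> bool:
    """Joint check: does this SSN belong to this name and date of birth?

    `ssn_holders` is a hypothetical stand-in for an authoritative
    SSN-to-holder source.
    """
    holder = ssn_holders.get(applicant["ssn"])
    return holder is not None and holder == (applicant["name"], applicant["dob"])


ssn_holders = {"123-45-6789": ("Sam Child", date(2015, 6, 1))}  # real holder is a minor
synthetic = {"name": "Jane Roe", "ssn": "123-45-6789", "dob": date(1990, 1, 1), "address": "1 Main St"}

print(fields_pass(synthetic))                    # True
print(identity_coheres(synthetic, ssn_holders))  # False
```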

Document verification catches obvious forgeries and structural anomalies. It cannot reliably detect documents that are structurally correct but digitally altered. The ACFE's 2025 fraud trend review found digital injection attacks, where AI-generated media is fed directly into verification pipelines at the software level, became a defining fraud trend in 2025. Fraudsters bypass the camera entirely, inserting synthetic facial data before it reaches the liveness engine. Active liveness checks are the most vulnerable: the fraudster genuinely blinks, turns, or smiles, while a face-swap tool maps the target identity onto those authentic biological movements. The liveness signal is real. Only the identity is not.
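The active-liveness weakness can be sketched with a hypothetical `CaptureSession` record. A challenge verifies that the requested movements happened, so a face-swap driven by a real person's movements passes it. The `from_trusted_sensor` flag is an assumption. It stands in for whatever capture-integrity evidence a system has, and producing that evidence reliably is the hard part, which is not shown. The flag also addresses only the injection path, not every face-swap technique.

```python
from dataclasses import dataclass


@dataclass
class CaptureSession:
    requested_actions: list[str]  # challenge issued, e.g. ["blink", "turn_left"]
    observed_actions: list[str]   # actions detected in the received video
    from_trusted_sensor: bool     # hypothetical: frames attested as coming from a physical camera


def active_liveness_passes(session: CaptureSession) -> bool:
    # The challenge only asks whether the requested movements occurred.
    # It says nothing about whose face performed them.
    return session.observed_actions == session.requested_actions


def session_accepted(session: CaptureSession) -> bool:
    # Accept only if the movements match AND the frames did not bypass the camera.
    return active_liveness_passes(session) and session.from_trusted_sensor


injected = CaptureSession(["blink", "turn_left"], ["blink", "turn_left"], from_trusted_sensor=False)
print(active_liveness_passes(injected))  # True: real movements, swapped face
print(session_accepted(injected))        # False: stream did not come from the sensor
```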

Voice biometrics offer no reliable fallback. A University of Waterloo researcher demonstrated an attack on a major financial institution's voice authentication system that reached a 99% success rate after six attempts, using commercially available tools. A 2025 technique called FOICE goes further still, generating convincing synthetic speech from a single photograph, with no prior voice recordings required.

The combined effect is a verification stack that checks multiple signals independently but never confirms they belong to the same living person.

Verification systems check whether data is valid. They were not built to verify that it belongs to a human being who actually exists.

What recent cases reveal about the operational playbook

Cases from 2024 and 2025 across multiple jurisdictions show a consistent pattern, which is itself revealing: the underlying vulnerability is structural, not regional.

In Amsterdam in December 2025, a 34-year-old man was arrested after opening 46 bank accounts across multiple financial institutions using deepfake technology. His method required no sophisticated infrastructure. He approached people searching for rental housing, collected copies of their passports, and used AI to overlay their facial features onto his own during biometric selfie checks. He passed liveness verification at bank after bank and gained full account access each time. He was caught only after one institution flagged suspicious activity, and police intercepted him at the border carrying dozens of bank cards. Dutch police described the case as a serious warning about what commercially available AI tools now make routine.

In Toronto, police arrested 12 people in a large-scale synthetic identity credit fraud ring after a financial institution flagged patterns across multiple accounts that should not have been connected. No single account raised an alarm on its own. It was the cross-account behavioural signature that ultimately exposed the operation.
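The reporting does not say which attributes linked the Toronto accounts. As a hypothetical illustration, the sketch below treats each account as a set of identifiers. The phone, device and address labels are assumptions. It uses union-find to group accounts that share any identifier, either directly or through a chain. No single account looks unusual, but the cluster does.

```python
from collections import defaultdict


def link_accounts(accounts: dict[str, set[str]]) -> list[set[str]]:
    """Group accounts that share any identifier, directly or transitively."""
    parent = {a: a for a in accounts}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    first_seen: dict[str, str] = {}  # identifier -> first account that used it
    for acct, identifiers in accounts.items():
        for ident in identifiers:
            if ident in first_seen:
                union(first_seen[ident], acct)
            else:
                first_seen[ident] = acct

    groups: dict[str, set[str]] = defaultdict(set)
    for acct in accounts:
        groups[find(acct)].add(acct)
    return [g for g in groups.values() if len(g) > 1]  # only multi-account clusters


accounts = {
    "A": {"phone:555-0101", "device:d1"},
    "B": {"device:d1", "addr:1 Elm"},
    "C": {"addr:1 Elm"},
    "D": {"phone:555-0199"},
}
print(link_accounts(accounts))  # one cluster containing A, B and C
```

Note that A and C share nothing directly. They are linked only through B, which is the kind of connection a per-account review never sees.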

In the United States, one documented case involved approximately 700 synthetic identities built on SSNs sourced from incarcerated individuals, patiently cultivated until COVID relief programmes launched. Pre-established credit histories made the identities immediately eligible for SBA loans. The operation yielded $24 million in fraudulent claims before prosecution. The infrastructure was built before the opportunity arrived, which is the defining characteristic of well-run synthetic identity operations.

In Southeast Asia, a criminal ring laundered approximately $38 million through accounts opened using AI-generated facial scans derived from short videos of recruited participants. The technique combined genuine liveness signals with constructed identities, a hybrid approach that defeated both liveness detection and document matching simultaneously.

Three dimensions of the problem rarely discussed

Children carry the exposure for years without knowing it. A fraudster with a child's SSN has roughly a decade before that child turns 18 and applies for credit for the first time. Documented cases involve young adults discovering significant debt attached to their SSN before they have ever applied for anything. There are no widespread mechanisms for proactively monitoring minors' credit files. Better onboarding KYC does not close this gap.

The credit bureau creates the vulnerability it is meant to prevent. Automatic credit file creation upon a denied application is not a flaw that can be patched. It is how credit reporting was architecturally designed. A GAO audit in September 2024 found the SSA's electronic consent-based SSN verification service, built specifically to address this gap, had spent $62 million, recovered $25 million in fees, and had minimal financial institution participation. The structural fix exists; it is simply not being used at scale.

Generative AI has reduced the entry cost to near zero. Europol's EU Serious and Organised Crime Threat Assessment 2025 found criminal networks deploying AI-powered voice cloning and real-time video deepfakes that require no meaningful technical skill to operate. The Amsterdam arrest involved one person and commercially available software. That is not an exceptional case. It is the direction of travel.

The detection gap has a structural answer

Most institutional responses to synthetic identity fraud involve adding layers to existing verification: stronger liveness challenges, more database checks, better document scanning. Each addition is individually rational. Collectively, they still do not answer the question that matters: is this a real person?

Gartner projected in early 2024 that by 2026, nearly a third of enterprises will consider identity verification solutions unreliable in isolation due to AI-generated deepfakes. The cases above suggest that prediction may be arriving ahead of schedule. The failure mode is not that any individual layer is inadequate on its own terms. It is that layers are evaluated independently when the threat is specifically engineered to pass them independently.

Synthetic identities behave like good customers. They pay back small loans, avoid unusual transactions, and produce clean verification histories. The signal that something is wrong is not anomalous behaviour. It is a coherent digital history that does not correspond to a coherent human existence. Detecting that requires reasoning across multiple signals simultaneously, with explainability built in: the ability to show precisely why a decision was made, which signals diverged, and what pattern that divergence indicates.
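To show what "reasoning across signals with explainability" can mean in its simplest form, the sketch below uses a generic, rule-based illustration. It is not DuckDuckGoose's method. The `Applicant` fields and all thresholds are hypothetical. The sketch returns the reasons alongside the decision, so an applicant who passes both verification layers can still be flagged, and the reviewer can see exactly which signals diverged.

```python
from dataclasses import dataclass, field


@dataclass
class Applicant:
    claimed_age_years: int
    credit_file_age_years: float
    authorised_user_tradelines: int  # lines inherited by being added to someone else's account
    own_tradelines: int
    linked_applications: int         # other applications sharing a device, phone or address
    document_ok: bool
    liveness_ok: bool


@dataclass
class Decision:
    flagged: bool
    reasons: list[str] = field(default_factory=list)


def assess(a: Applicant, min_divergences: int = 2) -> Decision:
    """Flag on failed layers, or on several cross-signal divergences, and say why."""
    reasons: list[str] = []
    if not a.document_ok:
        reasons.append("document verification failed")
    if not a.liveness_ok:
        reasons.append("liveness check failed")
    layer_failed = bool(reasons)

    # Cross-signal checks: each is weak alone; together they describe a history
    # that does not fit a real person. Thresholds are illustrative only.
    divergences: list[str] = []
    if a.claimed_age_years >= 30 and a.credit_file_age_years < 2:
        divergences.append(
            f"credit file is {a.credit_file_age_years} years old for a claimed age of {a.claimed_age_years}"
        )
    if a.authorised_user_tradelines > a.own_tradelines:
        divergences.append("history is built mostly on authorised-user tradelines")
    if a.linked_applications >= 2:
        divergences.append(f"shares identifiers with {a.linked_applications} other applications")

    reasons.extend(divergences)
    flagged = layer_failed or len(divergences) >= min_divergences
    return Decision(flagged=flagged, reasons=reasons)


decision = assess(Applicant(38, 1.5, 3, 1, 4, document_ok=True, liveness_ok=True))
print(decision.flagged)  # True, although both verification layers passed
for reason in decision.reasons:
    print("-", reason)
```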

That is the design philosophy behind DuckDuckGoose's detection architecture. Our explainable AI approach does not return a binary pass or fail. It surfaces the specific signals indicating synthetic or deepfake-assisted identity in a form compliance and fraud teams can review, interrogate, and act on. In a fraud category where the crime is structurally designed to look like nothing at all, knowing exactly what you are looking at is not a feature. It is the entire defence.

Is a Synthetic Identity in Your Onboarding Queue?

DuckDuckGoose detects deepfake-assisted identity fraud with explainable AI that shows exactly which signals flagged and why, giving your compliance team the audit trail to act on every decision.
